Teaching

Ten years designing first-of-their-kind graduate courses at NYU Tandon and Tisch School of the Arts. Every course is built around working prototypes, cross-disciplinary teams, and real deadlines. Alumni have won Sports Emmys, led virtual production at major studios, and built creative technology practices of their own.

Flagship Courses

Virtual Production Development

2020 – 2025 · IDM / ITP / Film & Television

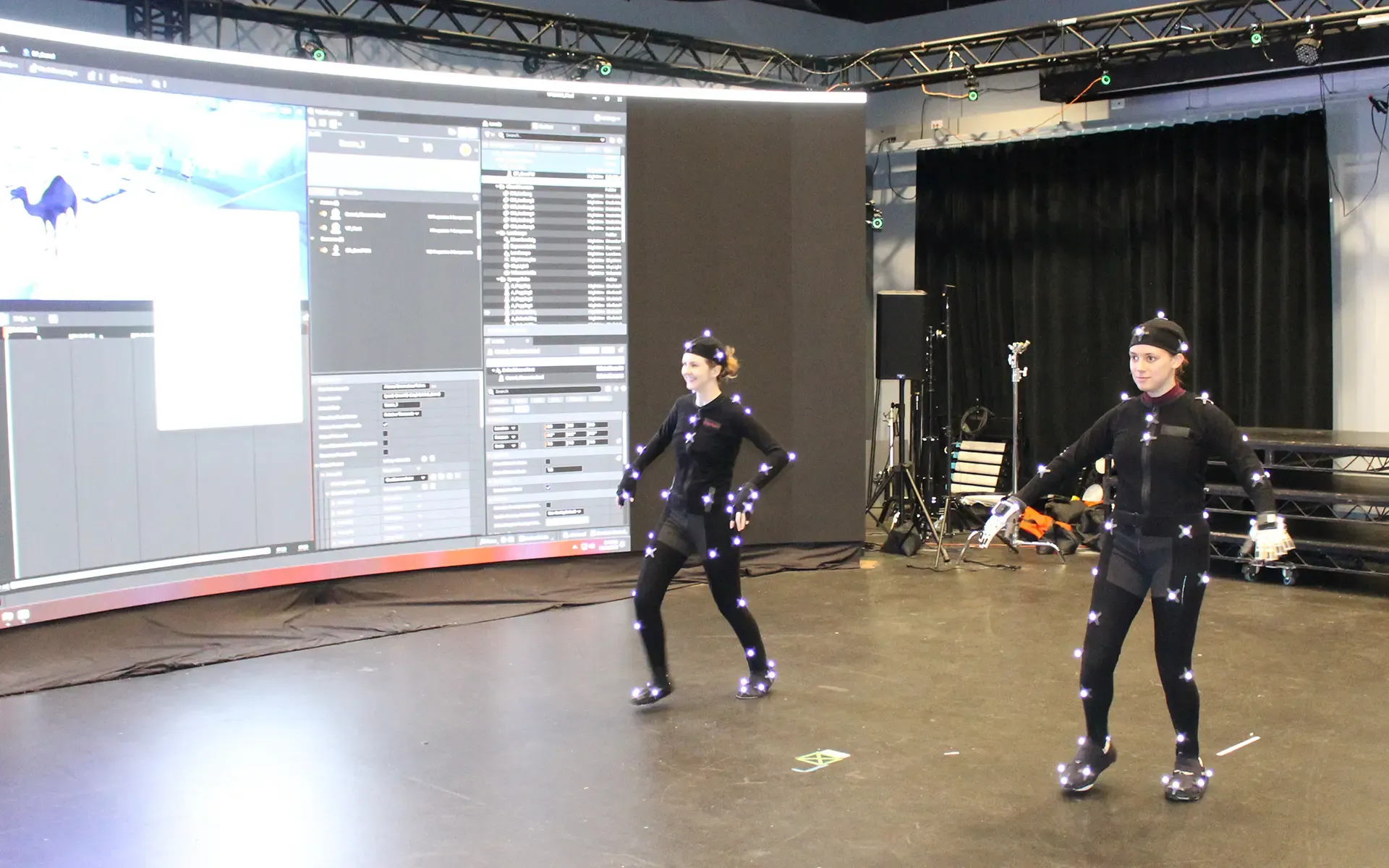

Students prototype short films using live motion capture, performative avatars, and show control in Unreal Engine.

Student Work: 3 images

The flagship course of NYU Tandon’s virtual production program. Cross-disciplinary teams — engineers alongside filmmakers from three NYU schools simultaneously — produce short movies using live motion capture, real-time rendering in Unreal Engine, and synchronized show control systems. Every production decision happens in-engine, in real time.

Initially developed with Academy Award nominee Bradford Young as a co-teacher, the course evolved each year as the technology moved. Early semesters established the fundamentals of in-camera VFX and LED wall workflows. Later iterations integrated AI-assisted pipelines, including an Unreal Engine to ComfyUI style transfer workflow co-developed with Jiri Kilevnik, former Creative Pipeline Director at Runway, and collaborators from Framestore. The final semester incorporated Gaussian splatting, AI rendering pipelines, and generative workflows that students used in live production contexts.

Students operate NYU’s OptiTrack motion capture stage, manage real-time retargeting to custom avatars, build Unreal Engine environments, run show control via OSC, and deliver finished short films under production deadlines. The curriculum covers the complete virtual production pipeline from pre-production planning through live capture to post and delivery.

Alumni from this course won three Sports Emmy Awards for the Super Bowl LVIII broadcast on Nickelodeon, building the augmented reality integration using motion capture, Unreal Engine, and tracked cameras for 123.4 million viewers.

Also created the first-to-market online virtual production workforce development training, sponsored by an Epic Games MegaGrant.

Amusement Park Prototyping

2022 – 2025 · Integrated Design & Media

Students design themed attractions from concept through kinetic diorama to fully realized ride simulation in Unreal Engine 5.

Student Work: 1 image · 2 videos

Tell a story connected to a larger intellectual property in five minutes or less using cyber-physical systems. Students design theme park attractions from scratch — narrative structure, attraction layout, environmental design, animatronics, robotic motion control, multi-sensory components, and real-time show control — then build working prototypes that move, light, project, and respond.

The full technical stack: Unreal Engine 5 for real-time ride-through simulation, physical computing with ESP32 and Arduino for animatronics and sensor integration, Stewart platform motion bases for ride simulation, projection design, Dolby Atmos and multi-channel spatial audio, volumetric video capture, motion capture, photogrammetry, NeRF, digital fabrication, and show control via Max/MSP with OSC and UDP protocols. Students produce both kinetic dioramas with synchronized physical components and complete ride-through simulations in Unreal Engine.
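For readers unfamiliar with OSC-over-UDP show control, the following is a minimal sketch of what a single cue message looks like on the wire, following the OSC 1.0 encoding rules (null-terminated strings padded to four bytes, big-endian arguments). It is an illustration only, not course material: the address `/ride/fan`, the host, and port 7400 are hypothetical, and the receiver is assumed to be any OSC listener, such as a Max/MSP patch reading UDP.

```python
import socket
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * ((-len(raw)) % 4)


def osc_message(address: str, *args) -> bytes:
    """Build one OSC 1.0 message from an address and int, float, or str arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            # bool is a subclass of int in Python; reject it to avoid silent surprises.
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # 32-bit big-endian integer
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # 32-bit big-endian float
        elif isinstance(arg, str):
            type_tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg).__name__}")
    return osc_string(address) + osc_string(type_tags) + payload


def send_cue(host: str, port: int, address: str, *args) -> None:
    """Send a single OSC message as one UDP datagram (fire and forget)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, *args), (host, port))


if __name__ == "__main__":
    # Hypothetical cue: run a fan at half speed on a prototype diorama.
    send_cue("127.0.0.1", 7400, "/ride/fan", 0.5)
```

Because UDP gives no delivery guarantee, a show controller built this way would typically resend critical cues or pair them with an acknowledgement message.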

The course covers story structure specific to themed entertainment — how to build tension, deliver payoff, and manage pacing in a physical space where the audience is moving. Students learn attraction layout, queue design, load/unload logistics, and the relationship between physical space and narrative arc.

Guest lecturers include Amy Jupiter (virtual production supervisor, Scholar in Residence at NYU Tandon) and John Huntington (show control systems, author of Show Networks and Control Systems). Co-taught with Scott Fitzgerald. Held at the Brooklyn Navy Yard Building 22 facility. Graduate-level intensive.

Also offered as a one-day workshop for festivals and conferences, and as executive-level training.

Foundation Courses

Bodies in Motion

2015 – 2019 · IDM / ITP

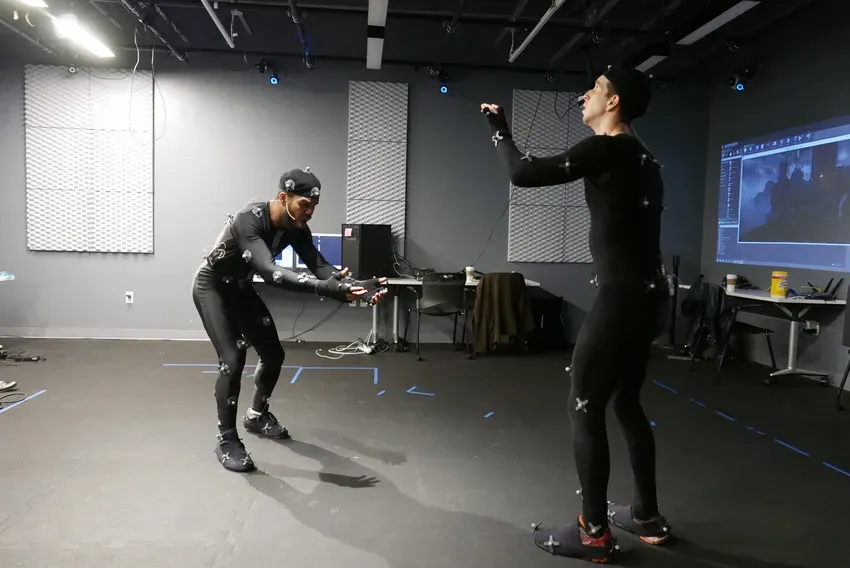

Students learn the complete motion capture pipeline — from calibration through live performance — and present original work in a public showcase.

Student Work: 3 videos

A full-pipeline motion capture production course that ends not with a grade but with a public show. Teams of four spend a semester learning to calibrate an OptiTrack system, capture up to four simultaneous performers, clean data in Motive, retarget in MotionBuilder, rig custom characters in Maya, and render in Unreal Engine — then install and perform their work live for an audience.

The course treated motion capture as a performance medium, not just a data acquisition tool. Students studied Labanotation and movement vocabulary to build shared language between technologists and performers. A signature exercise: “Charades with Live Mocap” — skeletons had to reveal character through physical constraints (a body filled with helium, a three-year-old, royalty navigating a dense forest). Groups were named after choreographers — Graham, Bausch, Ono, Brown, Monk — grounding the technology in its artistic lineage.

Technical stack: OptiTrack/Motive, Autodesk Maya, MotionBuilder, Unreal Engine, Max/MSP/Jitter, OSC show control, Spout, virtual camera via iPad. The final weeks taught professional show-running: equipment lists, load-in timelines, tech rehearsal, press kits, teaser trailers, documentation planning, and backup systems for when things break during a live show. Co-taught with Kat Sullivan. Cross-listed across two NYU schools with mandatory public exhibition.

Worlds on a Wire

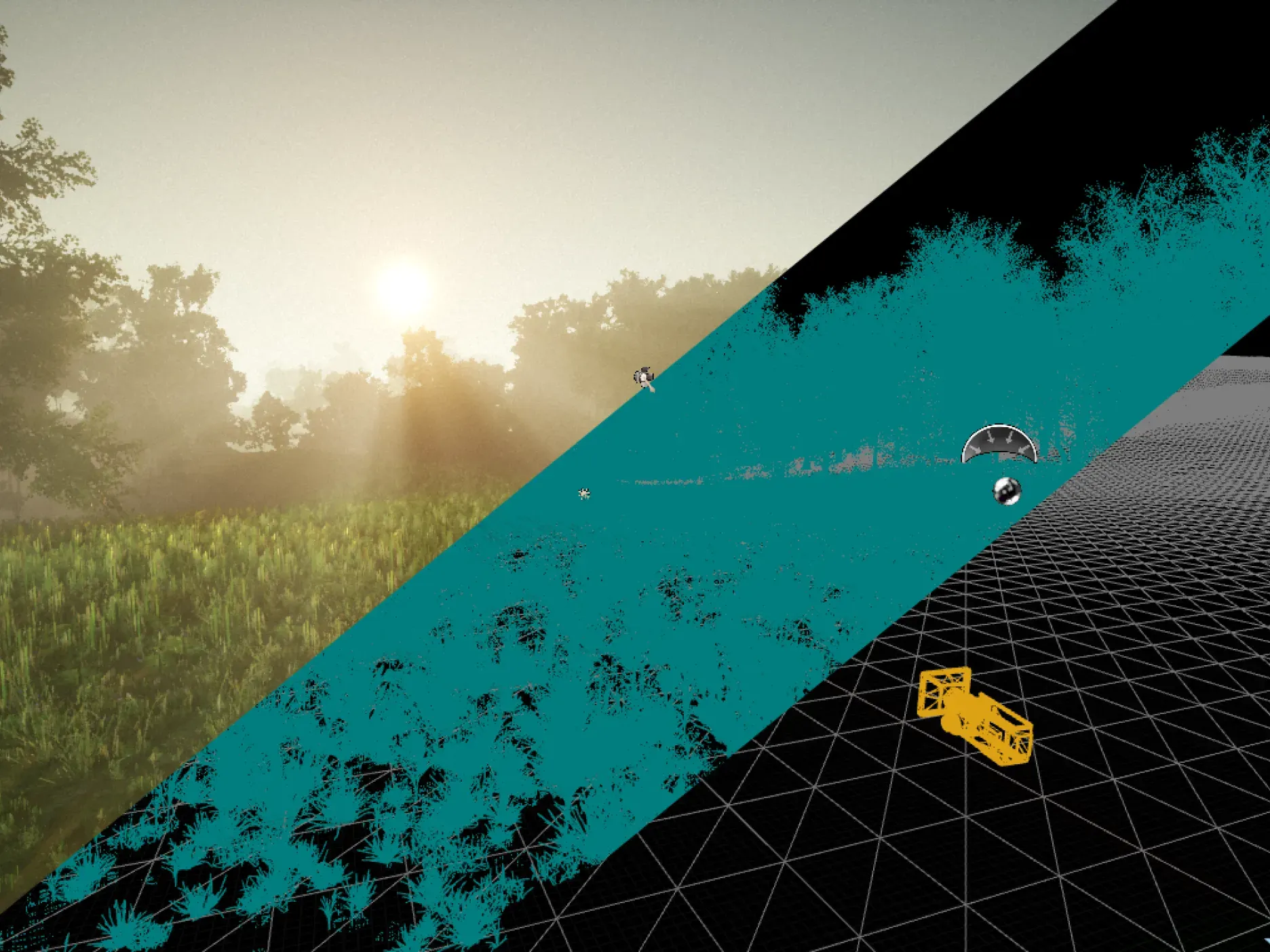

2017 – 2019 · IDM / ITP

Students build narrative VR installations in Unreal Engine paired with physical haptics, fabrication, and show control.

Student Work: 3 images

Named after Fassbinder’s 1973 film World on a Wire. The brief: build a narrative VR experience with meaningful physical components. The result should not be a headset demo but a spatial installation where the virtual and the tangible are inseparable.

Students worked solo for midterms and in cross-disciplinary teams for finals, moving from mood boards and storyboards through a full production pipeline: environment design in Unreal Engine, character creation via photogrammetry and Mixamo, cinematic sequencing, Blueprint scripting, 360 video integration, and LiDAR scanning. The second half of the semester introduced physical computing: OSC show control, Arduino-driven fans and haptic feedback, MIDI via TouchOSC, SubPac bass transducers, and motion tracking through Kinect and Perception Neuron. Every final project had to physically touch the audience, not just visually surround them.

The reading list paired technical VR documentation with critical theory on empathy, embodiment, and the limits of immersion. Students were required to build project websites, create exhibition posters, and prepare festival submissions — learning to get work into the world, not just onto a hard drive. Cross-listed across two NYU schools with separate sessions running simultaneously.

Canvas of Light and Shadow

2025 · Integrated Design & Media

Curriculum designer and guest lecturer for a site-specific architectural projection mapping course culminating in work projected onto Brooklyn Navy Yard buildings.

Student Work: 1 image · 2 videos

Designed the curriculum for a seven-week intensive in architectural projection mapping, combining photorealistic rendering in Unreal Engine 5.4 with media server workflows in TouchDesigner. Taught by Louise Lessel as primary instructor with guest lectures by Todd Bryant.

The course moves from projection masking on objects through motion graphics, material design, previsualization in Unreal Engine, pixel mapping real buildings, site-specific narrative development, and rendering for media server playback via the HAP and NotchLC codecs. Students use kantanMapper in TouchDesigner for projection alignment and LiDAR scanning for spatial capture of architectural surfaces. Required reading includes Elinor Fuchs’s “EF’s Visit to a Small Planet,” grounding the technical work in dramaturgical thinking about how to read and inhabit a world.

Students deliver a 60-second narrative-driven projection piece mapped onto a building in the Brooklyn Navy Yard. The course represents a direct translation of Todd’s decade of professional projection mapping work — from LUMA Festival and VIVID Sydney through the Norwegian Cruise Line dome and the IFP permanent installation — into a structured curriculum others can teach.

Professional Development

Virtual Production 4-Course Bundle

2020 – 2022 · Professional Education

First-to-market online virtual production workforce training for working professionals, developed with Epic Games MegaGrant funding. Sold out eight times.

Promotional Material: 3 images

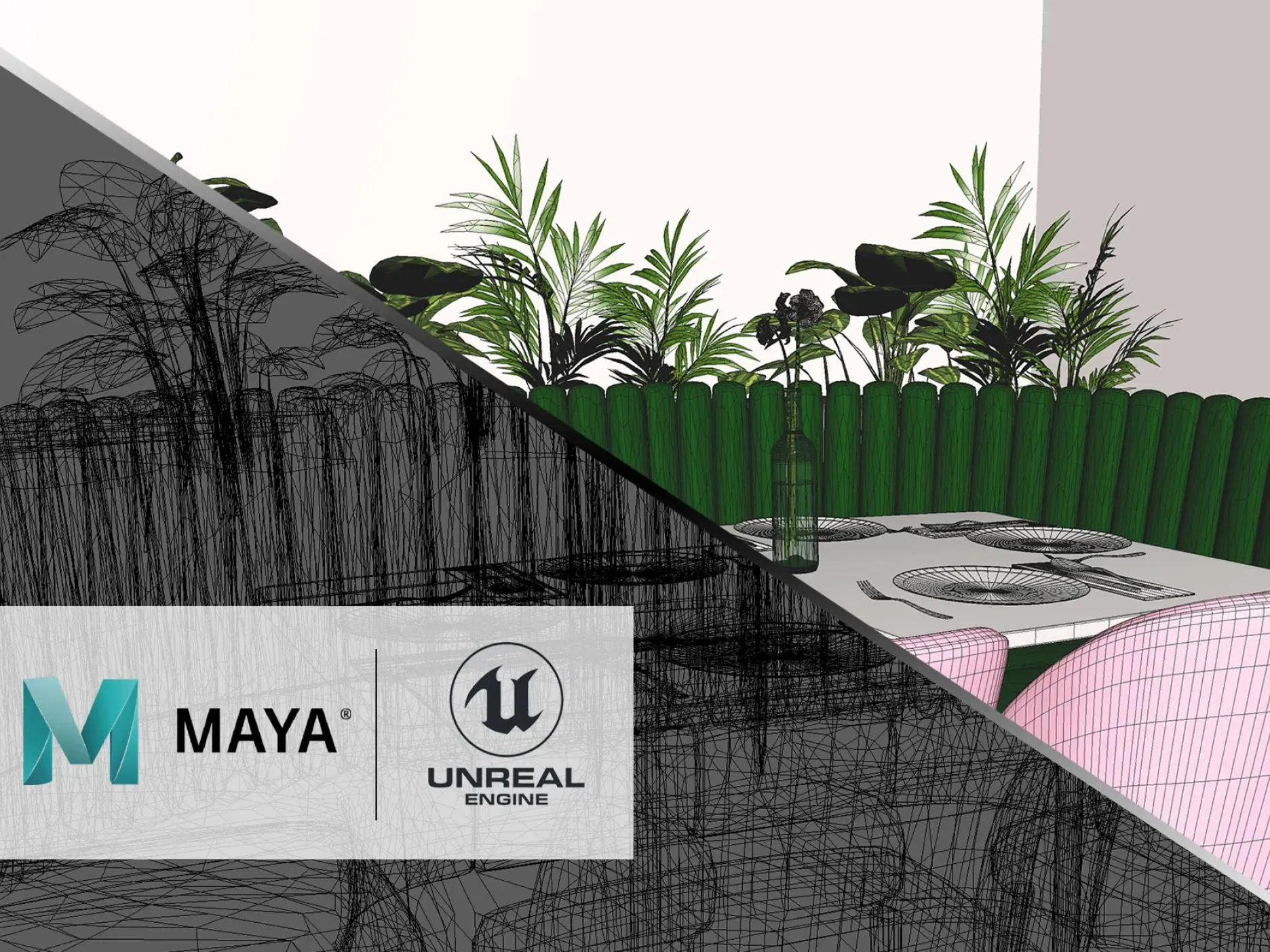

Designed the complete curriculum for a four-course professional certificate program in virtual production, funded by an Epic Games MegaGrant. The bundle — 3D Asset Creation and Workflows, World Building and Natural Aesthetics, Mixed Reality Filmmaking, and Remote Collaboration and Content Creation — was the first online virtual production training to reach market, before the industry had standardized any training pathways.

Directly taught Remote Collaboration and Content Creation in Unreal Engine, covering virtual production tools and techniques for distributed teams. Designed the curriculum and learning architecture for all four courses, structuring them as a cohort-based sequence that working professionals could complete alongside full-time jobs. The program ran almost entirely asynchronously, with optional live sessions and office hours.

The program sold out eight consecutive cohorts and established NYU Tandon as a leader in virtual production workforce development at a moment when studios were scrambling to staff LED volumes and real-time pipelines faster than talent existed.

Alumni Outcomes

- Three Sports Emmy Awards for Super Bowl LVIII broadcast on Nickelodeon — augmented reality integration using motion capture, Unreal Engine, and tracked cameras for 123.4 million viewers.

- NYU Tandon IDM ranked #4 nationally for AR/VR education, two consecutive years.

- Alumni across virtual production studios, creative technology agencies, and immersive entertainment companies worldwide.